Flipbook feels less like just another AI product launch and more like a small revolt against the dead, rectangular boredom of the prototypical prompt-based AI interface. The project describes itself as an infinite visual browser generated on demand, in real time, where every page is an image and every click opens a deeper visual exploration of whatever caught your eye.

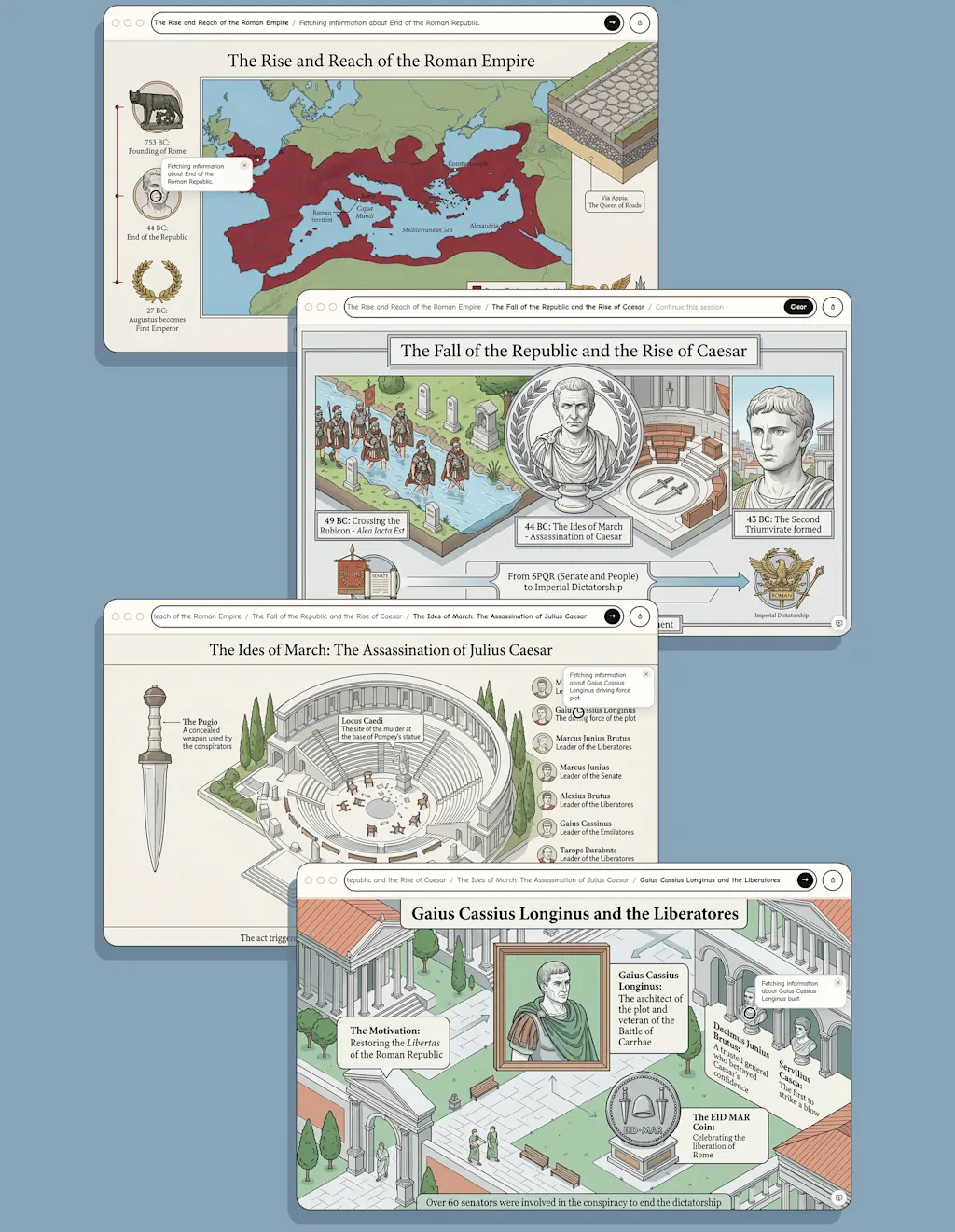

Rather than writing a prompt and receiving a torrent of text, with Flipbook you get information from a large language model turned into a beautifully illustrated “book” page that you can click on to drill deeper into a topic.

And oh boy, it feels fantastic to me. The idea is both fresh and familiar, and it makes me want to trash Gemini forever and make my entire web experience exactly like this.

What does Flipbook do?

What makes it exciting is not just the technology, but the interface philosophy behind it. Instead of forcing people to translate curiosity into a litany of text prompts, Flipbook lets them type into a browser-like search bar and then watch as that information appears as a beautiful illustration.

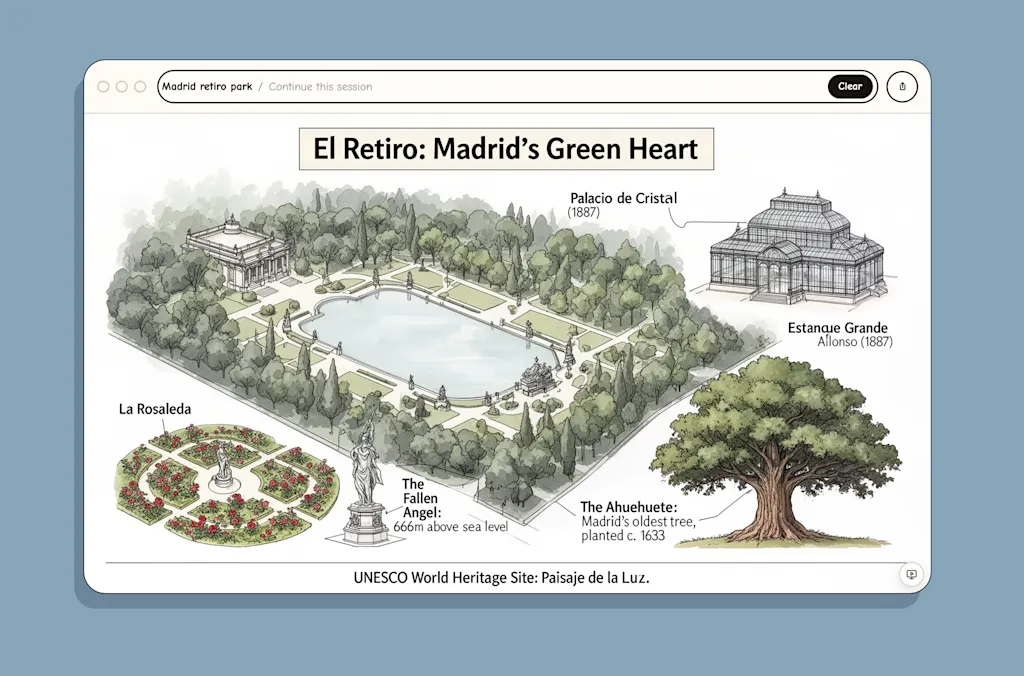

Whether you’re interested in learning about the Roman Empire or the romantic jewel of Parque del Retiro, in Madrid, you’ll get a depiction of the basics of that subject. (Warning: Because Flipbook is currently a prototype running on a small server, it is slow and doesn’t quite render responses in real time as intended.)

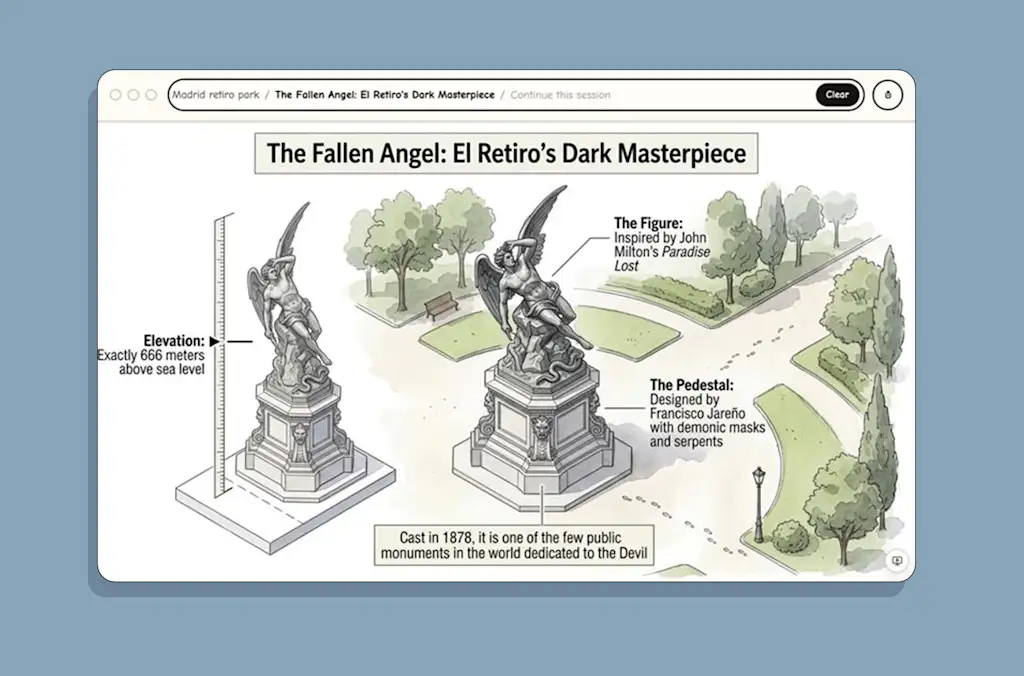

Once the basic subject has been displayed, you can click anywhere on the page and Flipbook will dive deeper, opening a new page of the “book” with dynamically generated illustrations and text.

Flipbook treats knowledge less like a database to be queried and more like a landscape to be explored. As the creators—Zain Shah, Eddie Jiao, and Drew Carr—brilliantly put it, the current paradigm of chat boxes and rigid layouts being sold as the future “felt like sipping an ocean of wisdom through a tiny straw.”

It feels like the closest thing you will get to an LLM becoming a tactile, analog experience this side of printing an actual illustrated book in real time.

It’s HyperCard colliding with AI

Flipbook immediately evokes Bill Atkinson’s HyperCard, the legendary 1987 Apple software that packed information into stacks of graphically linked visual cards. This precursor to the World Wide Web let people move through ideas by clicking on drawn buttons and regions of a bitmap screen. In HyperCard, you could draw a house, define the front door as a clickable zone, and link it to another card showing the living room. It was a masterpiece of interactive design, but it required a human to meticulously draw and link every single card.

Flipbook is the HyperCard dream fully realized by AI, capturing the same sensation of moving through knowledge spatially rather than linearly. When you click on a visual element—say, the engine of a car or a specific mountain in a landscape—there is no pre-authored card waiting for you.

The system analyzes the exact region you clicked, infers what you’re curious about, and hallucinates the next detailed card into existence in real time. It looks like the kind of beautifully illustrated stack HyperCard might have become if it had survived long enough to collide with generative AI.
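
To make that contrast concrete, here is a minimal sketch in Python. It is an illustration only, not Flipbook's or HyperCard's actual code: every name in it (`Hotspot`, `Card`, `authored_click`, `generated_click`, and the two stub functions) is hypothetical, and the stubs merely stand in for the vision and image models a real system would call.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class Hotspot:
    """A rectangular clickable zone that a human linked to another card."""
    left: int
    top: int
    right: int
    bottom: int
    target: str  # name of the card this zone opens

    def contains(self, x: int, y: int) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom


@dataclass
class Card:
    name: str
    hotspots: list[Hotspot] = field(default_factory=list)


def authored_click(card: Card, x: int, y: int) -> Optional[str]:
    """HyperCard-style navigation: only pre-drawn zones lead anywhere."""
    for spot in card.hotspots:
        if spot.contains(x, y):
            return spot.target
    return None  # nobody authored a link here


def generated_click(
    topic: str,
    x: int,
    y: int,
    describe_region: Callable[[str, int, int], str],
    generate_page: Callable[[str], str],
) -> str:
    """Generative navigation: infer the subject under the click, then
    create the next page on demand. Both callables are assumptions that
    stand in for real models."""
    subject = describe_region(topic, x, y)
    return generate_page(subject)


# Stub "models" (hypothetical), so the sketch runs without any AI service.
def fake_describe_region(topic: str, x: int, y: int) -> str:
    return f"{topic} near ({x}, {y})"


def fake_generate_page(subject: str) -> str:
    return f"[illustrated page about {subject}]"


# The house example: the front door is the only authored link.
house = Card("house", [Hotspot(40, 60, 80, 120, target="living room")])
```

With the authored card, `authored_click(house, 50, 100)` lands on the living room, while a click anywhere else returns nothing. `generated_click` always returns a page, which is the whole point, and also why the result is only as trustworthy as the models behind it.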

The real break with the web is even stranger than that comparison suggests. By Flipbook’s own description, what you see contains no HTML at all, no conventional interface elements, and no text overlays. Even the text is rendered as pixels by the image model itself, much as artwork painted onto a HyperCard card was nothing more than pixels on a bitmap.

That means your computer isn’t downloading code and assembling a layout the way browsers have done for decades. Instead, the AI paints the entire page (text, buttons, and graphics) as a single cohesive image streamed directly to your screen. And if you click on the “animate” button in the lower-right corner, the drawing comes alive, moving dynamically as you zoom, with objects in motion or people populating landscapes.

Again, don’t expect this to work perfectly. In my experience, the animate button failed to connect, presumably because of the demand and the lack of server power. Shah was refreshingly blunt about the prototype’s underlying reality, calling it a “house of cards of APIs and open models tied together with duct tape.”

But the experimental live video stream mode feels brilliant when it works. According to Flipbook, the feature animates each image and creates seamless transitions between states, turning a slideshow into a continuous morphing journey. The authors say the video component relies on an LTX-based generation system running alongside the image generation stack.

That matters because it hints at a future in which Flipbook may be animated all the time, made up not of static illustrated pages but of fluid visual environments, which feels positively Harry Potter-esque.

The hallucinations

Of course, the magic comes with caveats. As its team describes it, the information in Flipbook comes from a combination of an “agentic web search” and the model’s own world knowledge. It basically seeks out information on the internet, looking into reliable sources, and puts things together. But as with any other LLM, inaccuracies can still happen in both the text and the drawing.

I saw this myself when drilling through Madrid’s Parque del Retiro: Flipbook showed an illustration that vaguely resembled the real park but was clearly wrong. Likewise, when I clicked to learn more about the statue of the Fallen Angel (said to be the only statue in the world dedicated to Satan, built at 666 meters above sea level), it showed something that looked nothing like the Fallen Angel until I clicked on the statue itself and Flipbook opened a detailed page with pretty drawings of the real thing.

The authors claim, however, that you should expect the same level of accuracy as any other LLM. And as Shah points out, these underlying models will become more accurate, and the system will eventually support robust UIs and let you take real actions, like researching and booking a trip entirely inside the generated canvas.

Still, what matters here is not whether Flipbook is complete today. What matters is that it points to an AI future that is finally about experience instead of output, interface instead of an irritating chatbot. Understanding something visually feels a lot more human than reading endless generated text. If the past three years of AI have been about asking machines to talk, Flipbook suggests the next phase may be about teaching them how to show and to educate.

This spatial approach to data is why Flipbook may be the most compelling educational use of AI I’ve seen so far. Most chatbots are good at answering questions, but poor at building intuition. Flipbook seems designed for exactly that missing layer: not just telling you what something is, but showing you its context, its relationships, and the next question you didn’t know to ask.